According to ZDNet, Nvidia unveiled its new Rubin AI supercomputing platform at CES 2026, designed to drastically cut the cost of running advanced AI. The platform promises a 10x reduction in inference token costs and needs only a quarter as many GPUs to train certain models as the current Blackwell generation. It’s built around six integrated chips, including a new Vera CPU and Rubin GPU, linked by an NVLink 6 Switch. The first Rubin platforms, like the NVL72 configuration, are set to roll out to partners in the second half of 2026. Initial customers will be Amazon Web Services, Google Cloud, and Microsoft. Nvidia’s stated goal is to accelerate mainstream AI adoption by making large-scale deployment far more economical.

The Scale Play

Here’s the thing: Nvidia isn’t really selling a product to you or me. They’re selling an entire ecosystem—a pre-integrated “AI factory in a box”—to the handful of companies that can actually afford it. The cloud giants. And that’s a brutally smart strategy. By offering this extreme level of integration, from the CPU and GPU down to the networking switch, they’re locking in their dominance at the infrastructure layer. It’s not just about selling more chips; it’s about selling the entire blueprint for the next generation of data centers. If you’re AWS, buying into Rubin means you’re buying into Nvidia’s entire stack. The switching costs become astronomical.

Costs and Consumers

So, will this actually make AI cheaper for the end user? Probably, eventually. But it’s a trickle-down effect. The immediate benefit goes to the big cloud providers and the massive AI labs that lease capacity from them. If Rubin delivers on its 10x inference cost promise, it could make running something like GPT-5 or a giant multimodal model far less expensive. That might, in turn, lower API costs for developers and enable more complex AI features in consumer apps. But let’s be real: the “mainstream adoption” Nvidia talks about is likely less about you chatting with a free AI on your phone, and more about every Fortune 500 company embedding expensive, powerful AI agents into their operations. The consumer-facing stuff is just a sideshow for the real enterprise money.
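To put rough numbers on that trickle-down, here is a back-of-the-envelope sketch in Python. Only the 10x inference factor and the 4x GPU factor come from Nvidia's claims as reported; the price, the share of that price that is compute, the pass-through rate, and the cluster size are all made-up inputs for illustration, and the function names are hypothetical.

```python
import math


def projected_price(current_price, compute_share, cost_reduction, pass_through):
    """Estimate a new API price per million tokens.

    current_price:  today's price (hypothetical figure)
    compute_share:  fraction of that price that pays for inference compute (assumed)
    cost_reduction: factor by which compute cost falls (10 for the claimed 10x)
    pass_through:   fraction of the provider's saving passed on to customers (assumed)
    """
    compute_cost = current_price * compute_share
    saving = compute_cost * (1 - 1 / cost_reduction)
    return current_price - saving * pass_through


def gpus_needed(current_gpus, reduction_factor=4):
    """GPUs needed if a training job takes `reduction_factor` times fewer GPUs."""
    return math.ceil(current_gpus / reduction_factor)


# Hypothetical scenario: $10 per million tokens, 60% of which is compute.
full_pass_through = projected_price(10.0, 0.6, 10, 1.0)   # provider passes on everything
half_pass_through = projected_price(10.0, 0.6, 10, 0.5)   # provider keeps half the saving

print(f"Full pass-through: ${full_pass_through:.2f} per million tokens")
print(f"Half pass-through: ${half_pass_through:.2f} per million tokens")
print(f"GPUs for a job that used 1,000: {gpus_needed(1000)}")
```

Under these invented inputs, a 10x cut in compute cost leaves the end price well short of 10x cheaper, because compute is only part of the price and the provider decides how much of the saving to share. That is the trickle-down effect in miniature.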

The Hardware Arms Race

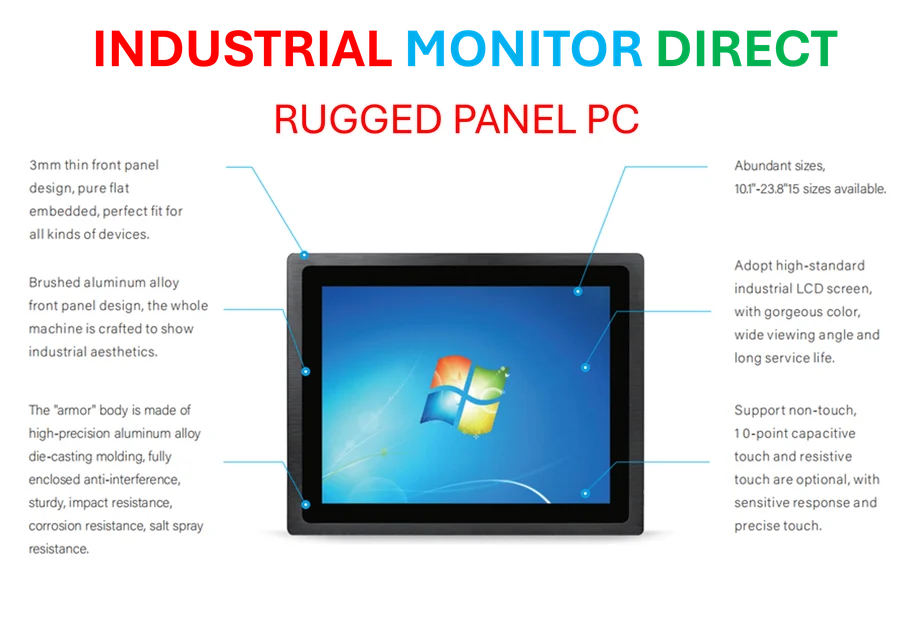

Look, the specs are insane: 50 petaflops of a new compute type, custom Arm cores, a sixth-gen NVLink. But the real story is the pace. Blackwell is barely out the door, and here’s Rubin. Nvidia is basically telling the market, and competitors like AMD and Intel, that the innovation cycle is now measured in months, not years. This relentless cadence is how you stay on top. For industries that depend on cutting-edge computing power, like scientific research or autonomous systems, this breakneck speed is both a blessing and a curse. It promises incredible new capabilities, but it also means your multimillion-dollar AI cluster is obsolete almost as soon as it’s installed. Even suppliers of industrial computing hardware such as panel PCs, whose products look nothing like a data center rack, need to track these architectural shifts, because the underlying compute trends dictate what’s possible on the factory floor or in logistics.

A Gamble on Scale

Nvidia’s big gamble is that the future of AI is about ever-larger, more integrated systems, not just better individual chips. They’re betting that the complexity of wiring together thousands of discrete components is the real bottleneck. By pre-solving that with Rubin, they’re selling simplicity at a planetary scale. The risk? It’s a hugely complex platform, and any major flaw could ripple through their biggest customers’ global operations. The reward? Total hegemony in the AI data center. If Rubin works as advertised, why would anyone build an AI supercomputer any other way? For the rest of us, it just means the AI tools we use might get a bit smarter and a bit cheaper, without us ever knowing the monstrous, Nvidia-branded hardware humming away in a warehouse that makes it all possible.